The Nyquist sampling theorem is a cornerstone of analog-to-digital conversion. It states that to faithfully preserve an analog signal when converting it to digital, you have to sample at more than twice the highest frequency present in the signal; for audio, that means more than twice the highest frequency a human can hear. This is part of why 44.1 kHz is considered high-quality audio: even though the mic capturing the audio vibrates faster, sampling it 44,100 times a second produces a signal that, to us, is indistinguishable from one with infinite resolution, since the bandwidth of our hearing peaks at about 20 kHz at best.
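To make that concrete, here is a minimal Python sketch (standard library only, function names are illustrative) that samples a 20 kHz tone at 44.1 kHz and recovers its frequency with a naive discrete Fourier transform. The window of 441 samples is an arbitrary choice so that 20 kHz lands exactly on a DFT bin (100 Hz bin spacing).

```python
import cmath
import math


def sample_tone(freq_hz: float, fs_hz: float, n: int) -> list[float]:
    """Sample a unit-amplitude sine at freq_hz, taking n samples at rate fs_hz."""
    return [math.sin(2 * math.pi * freq_hz * k / fs_hz) for k in range(n)]


def dominant_frequency(samples: list[float], fs_hz: float) -> float:
    """Return the frequency of the strongest DFT bin between 0 and fs/2."""
    n = len(samples)
    best_bin, best_mag = 0, -1.0
    # For a real-valued signal only bins 0..n//2 are unique; the rest mirror them.
    for b in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * b * k / n)
                  for k, x in enumerate(samples))
        if abs(acc) > best_mag:
            best_bin, best_mag = b, abs(acc)
    return best_bin * fs_hz / n


tone = sample_tone(20_000, 44_100, 441)
print(dominant_frequency(tone, 44_100))  # 20000.0
```

Only about 2.2 samples per cycle are taken here, yet the 20 kHz frequency is still recoverable because it sits below 44.1 / 2 = 22.05 kHz.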

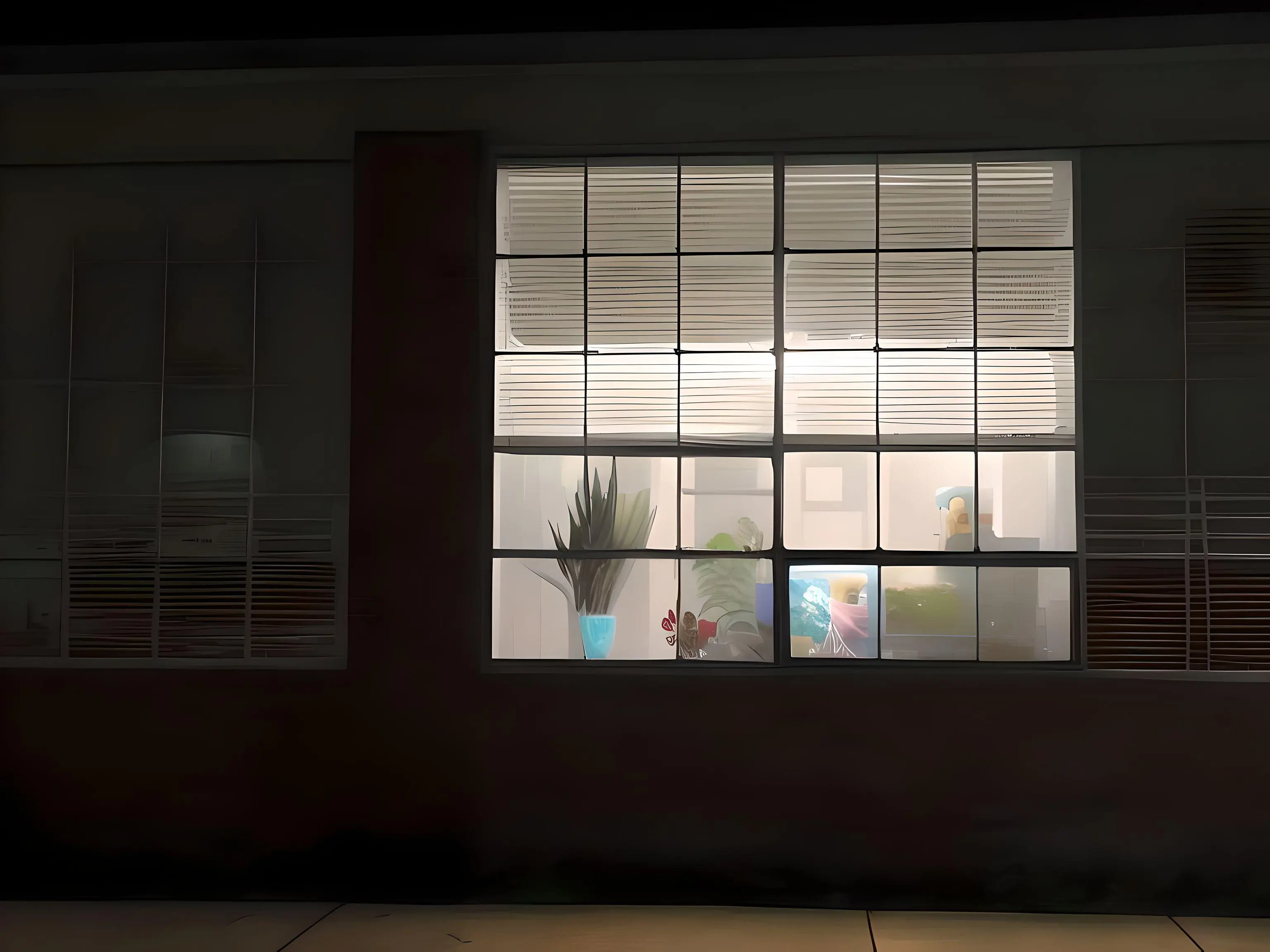

I’m no engineer, just a partially informed enthusiast. However, this picture of moving water somehow illustrates the Nyquist theorem to me: how the perception of speed varies with distance, and how distance somehow makes things look clear. The scanner blade samples at about 30 Hz across the horizon.

Scanned left to right in about 20 seconds. The view from a floating pier across an undramatic patch of the Oslo fjord.
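As a back-of-the-envelope sketch, assuming the blade captures one vertical column per sample at a steady 30 Hz (the helper name is hypothetical): a 20-second sweep gives about 600 columns, each taken at a different moment, so the horizontal axis of the image is effectively time. Any motion at a given spot that repeats faster than half the column rate (15 Hz) cannot be represented faithfully and will alias.

```python
def scan_summary(column_rate_hz: float, duration_s: float) -> dict[str, float]:
    """Rough numbers for a slit-scan sweep, assuming a constant column rate."""
    return {
        "columns": column_rate_hz * duration_s,       # total vertical slices captured
        "nyquist_hz": column_rate_hz / 2,              # fastest motion captured without aliasing
        "seconds_per_column": 1 / column_rate_hz,      # time gap between neighboring columns
    }


print(scan_summary(30, 20))
```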

*edit: I swapped the direction of the scan in OP

Little nitpick. Nyquist frequency is at least 2x the maximum frequency of the signal of interest.

The signal of interest could be something like ~20 kHz (human hearing or thereabouts) or it could be something like a 650 kHz AM radio signal.

Nyquist will ensure that you preserve artifacts that indicate primary frequency(ies) of interest, but you’ll lose nuance for signal analysis.

When we’re analyzing a signal more deeply, we tend to use something like 40x the expected max signal frequency; it’ll give you a much better look at the signal of interest.

Either way, neat project.

Double nitpick: according to Wikipedia, your definition is a “minority usage”. I teach signal processing and hadn’t heard of that one, so thanks for pointing me to it!

Nyquist frequency as half the sampling rate is what I use.
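The two usages are easy to mix up, so a tiny sketch may help: the Nyquist frequency is a property of the sampler (half the sampling rate), while the Nyquist rate is a property of the signal (twice its highest frequency). The function names are illustrative, not from any library.

```python
def nyquist_frequency(sample_rate_hz: float) -> float:
    """Highest frequency a given sampling rate can represent: fs / 2."""
    return sample_rate_hz / 2


def nyquist_rate(max_signal_hz: float) -> float:
    """Sampling-rate bound for a band-limited signal: 2 * f_max.
    In practice you sample strictly faster than this."""
    return 2 * max_signal_hz


print(nyquist_frequency(44_100))  # 22050.0, the CD "ceiling"
print(nyquist_rate(20_000))       # 40000, for ~20 kHz hearing
print(nyquist_rate(650_000))      # 1300000, for the 650 kHz AM example
```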

https://en.m.wikipedia.org/wiki/Nyquist_frequency#Other_meanings

Neat! I’ve definitely originated misunderstandings based on that. I wonder if it comes from my signals class lol

Little nitpick to you:

> Nyquist will ensure that you preserve artifacts that indicate primary frequency(ies) of interest, but you’ll lose nuance for signal analysis.
>
> When we’re analyzing a signal more deeply, we tend to use something like 40x the expected max signal frequency; it’ll give you a much better look at the signal of interest.

This is because your signal of interest, unless purely sinusoidal, has higher-frequency features such as harmonics, so if you sample at Nyquist you’d lose all of that. The Nyquist theorem still stands; it’s just that you wanna look at higher frequencies than you realize, because you wanna see the higher-frequency components of your signal.
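A rough sketch of the harmonics point, assuming an ideal square wave (its Fourier series has only odd harmonics, with relative amplitudes 4/(πk)): count how many harmonics of a 1 kHz square wave lie below fs/2 when sampling just above the Nyquist rate versus at 40x. Anything above fs/2 is either filtered away or aliased, so it is lost as a faithful component. The function name and example rates are illustrative.

```python
import math


def square_wave_harmonics(fundamental_hz: float, sample_rate_hz: float) -> list[tuple[float, float]]:
    """(frequency, relative amplitude) of the odd harmonics of an ideal square
    wave that fall below the Nyquist frequency fs / 2."""
    nyquist = sample_rate_hz / 2
    kept = []
    k = 1
    while k * fundamental_hz < nyquist:
        # Fourier series of a unit square wave: amplitude 4 / (pi * k) for odd k.
        kept.append((k * fundamental_hz, 4 / (math.pi * k)))
        k += 2
    return kept


print(len(square_wave_harmonics(1_000, 2_500)))   # 1: only the fundamental survives
print(len(square_wave_harmonics(1_000, 40_000)))  # 10: odd harmonics up to 19 kHz
```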

I’ll add that frequencies above the Nyquist point fold back into lower frequencies, so you need to filter them out with a low-pass filter before sampling.
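The folding can be written in one line: a pure tone at f, sampled at fs with no filter in front, shows up at |f − fs·round(f/fs)|. A minimal sketch (the function name is ours), assuming a single sine and no anti-aliasing filter:

```python
def alias_frequency(signal_hz: float, sample_rate_hz: float) -> float:
    """Frequency (between 0 and fs/2) at which a pure tone appears after
    sampling, assuming no anti-aliasing filter in front of the sampler."""
    # Shift the tone by the nearest whole multiple of fs, then fold negative
    # frequencies onto positive ones.
    return abs(signal_hz - sample_rate_hz * round(signal_hz / sample_rate_hz))


print(alias_frequency(10_000, 44_100))  # 10000: below fs/2, unchanged
print(alias_frequency(30_000, 44_100))  # 14100: folded down around 22.05 kHz
```

With a low-pass filter removing everything above fs/2 before sampling, the 30 kHz tone would be suppressed instead of turning into a spurious 14.1 kHz one.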

This isn’t just for audio but for any kind of sampling. For example, in video you see wagon wheels turning backwards, helicopter blades moving slowly, or, on old CRT displays, a big bright horizontal bar sweeping across the screen. That’s all frequency folding around half the frame rate.
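A minimal sketch of the wagon-wheel effect, assuming an idealized camera that takes instantaneous snapshots at a fixed frame rate (no motion blur, no rolling shutter) and a wheel whose spokes are indistinguishable from each other. The function name is hypothetical.

```python
def apparent_rotation(rev_per_s: float, frame_rate_hz: float, spokes: int = 1) -> float:
    """Apparent rotation rate (rev/s) of a wheel with identical spokes filmed
    at frame_rate_hz; a negative result means it seems to turn backwards."""
    # The image repeats every 1/spokes of a turn, so this is the pattern frequency.
    pattern_hz = rev_per_s * spokes
    # Wrap into [-fps/2, fps/2], the range of pattern rates a camera can show.
    # (Python's round() ties to even; exactly at fps/2 the direction is ambiguous anyway.)
    aliased = pattern_hz - frame_rate_hz * round(pattern_hz / frame_rate_hz)
    return aliased / spokes


print(apparent_rotation(23, 24))             # -1.0: slowly turning backwards
print(apparent_rotation(2, 24, spokes=12))   # 0.0: a 12-spoke wheel looks frozen
```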

It also applies to grids in images. The pixels in a display act as sample points, and you get frequency folding that leads to jagged lines, because a line segment is a half cycle of a square wave with a period of twice its length.

When that segment is diagonal, it becomes a two-dimensional signal with even higher-frequency components along each axis. This leads to jagged diagonal lines. This is called aliasing, because the higher frequencies show up under an alias as lower frequencies.

So when you apply antialiasing in video games, it’s doing math to smear the signal along the two axes of the display. This makes a cleaner-looking line.
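One common way to do this is supersampling: take several samples inside each pixel and average them, which acts like a crude low-pass (box) filter before the final one-sample-per-pixel grid. Games often use cheaper variants, so treat this as an illustration of the principle rather than what any particular engine does. The sketch shades the region under a shallow line y = 0.3x + 0.4 (arbitrary example values; function names are hypothetical):

```python
def pixel_coverage(px: int, py: int, slope: float, intercept: float, n: int) -> float:
    """Fraction of pixel (px, py) lying below the line y = slope * x + intercept,
    estimated from an n x n grid of sub-samples (n = 1 means one sample per pixel)."""
    inside = 0
    for i in range(n):
        for j in range(n):
            # Sub-sample positions at the centers of an n x n grid inside the pixel.
            x = px + (i + 0.5) / n
            y = py + (j + 0.5) / n
            if y < slope * x + intercept:
                inside += 1
    return inside / (n * n)


def render(width: int, height: int, slope: float, intercept: float, n: int) -> list[list[float]]:
    """Gray levels (0..1) of a small image of the region under the line, top row first."""
    return [[pixel_coverage(px, py, slope, intercept, n) for px in range(width)]
            for py in reversed(range(height))]


for row in render(8, 3, 0.3, 0.4, 1):  # one sample per pixel: hard 0/1 staircase
    print(" ".join(f"{v:.2f}" for v in row))
print()
for row in render(8, 3, 0.3, 0.4, 4):  # 4x4 supersampling: fractional edge pixels
    print(" ".join(f"{v:.2f}" for v in row))
```

With one sample per pixel every value is 0 or 1, which is the jagged staircase; with 4x4 sub-samples the edge pixels get intermediate gray levels, which reads as a smoother line.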

I love the small insights into signal theory/processing generated by this image, this is really cool stuff! Thank you for chiming in.

Thanks! Your nitpicking is most welcome.

This one is excellent

Thanks, I agree, it’s one of my favorite shots from this camera.

So… what is Dayquist?

The fighter of the Nyquist.

Champion of the sun!

Master of karate!

And friendship!..for everyone!

Makes sense.

Just a minor addendum: 44.1 kHz wasn’t really ideal for human hearing; it’s not quite double the highest audible frequency for a lot of humans. The frequency was chosen for audio CDs because the data was recorded onto U-matic video cassette tapes for duplication (if you watched the tapes back connected to a TV, it looked like a black-and-white checkerboard whose squares switched rapidly and randomly), and 44.1 kHz was the most that could reliably fit on the tape. It worked well enough since most of the sounds recorded are under 22 kHz, but it’s a contributor to why some people say CD audio isn’t as good as a high-quality analog recording. A lot of non-CD digital audio, like the audio for digital video or higher-resolution digital audio, prefers 48 kHz or a multiple of that, like 96 or 192 kHz.
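For the curious, the commonly cited arithmetic behind the exact number: the PCM adaptors stored 3 audio samples per usable video line. Assuming the usual figures of 245 usable lines per field at 60 fields/s (525-line monochrome video) or 294 lines per field at 50 fields/s (625-line video), both come out to the same rate. The function name is illustrative.

```python
def pcm_adaptor_rate(lines_per_field: int, fields_per_s: int, samples_per_line: int = 3) -> int:
    """Audio sample rate when each usable video line carries a fixed number of samples."""
    return lines_per_field * fields_per_s * samples_per_line


print(pcm_adaptor_rate(245, 60))  # 44100 (525-line / 60 Hz video)
print(pcm_adaptor_rate(294, 50))  # 44100 (625-line / 50 Hz video)
```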

I don’t have the time to write a well-thought-out reply, but CDs trump cassettes in terms of both dynamic range and noise floor. Records are worse than cassettes in both categories. I would personally trade a small amount of frequency response for the other two.
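For a sense of scale on the CD side only (this sketch makes no claim about cassette or vinyl figures), the textbook ideal signal-to-quantization-noise ratio of N-bit PCM for a full-scale sine is 20·log10(2^N) + 10·log10(1.5), roughly 6.02·N + 1.76 dB. Real players fall somewhat short of this ideal. The function name is illustrative.

```python
import math


def ideal_pcm_dynamic_range_db(bits: int) -> float:
    """Ideal SQNR of an N-bit converter for a full-scale sine wave:
    20*log10(2**N) + 10*log10(1.5), about 6.02*N + 1.76 dB."""
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)


print(round(ideal_pcm_dynamic_range_db(16), 1))  # 98.1 for CD's 16-bit samples
```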

That said, people are… well people. Do what makes you happy.

I think the people who make those claims are usually referring to vinyl records, not cassettes. That said, I would not be surprised if the people who claim they can hear a difference are mostly imagining it. Or perhaps it’s something that dates back to the early days of CDs when the equipment and mastering techniques were not as good as they became later in the ’90s/’00s, not something applicable today.

I suspect, but it’s hard to find technical data, that the noise floor and dynamic range of vinyl are worse than cassettes.

You’re right that there’s a strong degree of interplay with other things, though. Vinyl can really only be listened to indoors, which allows whoever is doing the mix to assume some things about the environment (it’s likely quiet, for example). Portable media really kicked off the loudness war, which means if you listen to a record (or tape or anything else) from, say, the ’60s or ’70s, it will sound a lot different than the same album “digitally remastered” in the ’00s.

Of course there’s some ancient broadcast standard at the bottom of 44.1 kHz, thanks for the clarification! (I work in film/TV and still struggle with explaining ‘illegal’ values to some clients on certain deliverables.)

I just had like three rounds of “Oh wow that’s more interesting than I thought” back-to-back.

Thanks! I’d love to hear your thoughts if you feel like sharing.

That’s really cool, the water looks like a Fourier transform.

In addition to scanning left to right, your scanner camera must also have a decent readout delay for a given horizontal location, top to bottom (or bottom to top?).

Excellent photo, as always. Water is an excellent subject for this type of camera. I wonder what a busy road at night would look like.

As for a busy road at night, I’m afraid that with this way of doing scanning photography it would be quite uninteresting. The motion is way too quick, and cars would render as either weird vertical lines or very small diagonal squiggles, depending on which direction you scan.

I suggest looking up some talks by the Hungarian photographer Adam Magyar; he’s given some great talks on transchronological (?) photography, and is a great artist himself.

You might be surprised, especially if you find a busy multi-lane road. LED lights on cars are generally PWM-driven, so your camera will pick up the strobing. Add in strobes from multiple vehicles and it might even get interesting.

Thanks for giving me a name to go down a rabbit hole with!

I haven’t really considered that. I’m assuming the (in this case) vertical sampling is ‘global’, as in the values at each sensor site are locked at the same time and then read out from the serial bus.

If there were a delay, stuff like fluorescent lighting would read as a moiré pattern, but I’ve only ever encountered streaking/linear distortion in those circumstances.

I think the ‘griddyness’ or general sense of direction in the water is purely a function of how water moves and not a result of readout delay.

I’d love to be proven wrong, though, so if I can do some experiment to determine either way, I’m all ears.

I’m only guessing about sequential sensor readout. It seems like cost would incentivize sequential readout, but then again that would make it hard for the scanner to move horizontally in a continuous sweep. You could try photographing something lit by a strobe with crisp edges (e.g., not a blinking incandescent light source).

And you’re totally right, the effect on the water could just come from sampling horizontal slices at a fairly fixed time interval. It just seems a bit too… “nice”? It is a very cool effect though.

Why is this called a “theorem”? It feels like a rule of thumb instead

It comes from mathematical interpolation. In order to find the frequency of a sine curve (and all signals are combinations of sine curves), you need more than two measured points per period. So the sampling frequency needs to be more than twice the signal frequency, at least for the parts of the signal you want to preserve. Frequencies higher than 22 kHz are useless to our ears, so we can ignore them when sampling.
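The “interpolation” part can be made concrete with the Whittaker-Shannon formula, which rebuilds a band-limited signal from its samples by summing shifted sinc functions. A minimal sketch with arbitrary example numbers (a 1 Hz sine sampled 8 times per second); because it uses only a finite window of samples, the reconstruction is approximate rather than exact. Function names are illustrative.

```python
import math


def sinc(x: float) -> float:
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1."""
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)


def reconstruct(samples: dict[int, float], fs_hz: float, t: float) -> float:
    """Whittaker-Shannon interpolation x(t) = sum_n x[n] * sinc(fs*t - n),
    truncated to the samples provided, so the result is approximate."""
    return sum(x_n * sinc(fs_hz * t - n) for n, x_n in samples.items())


fs = 8.0  # samples per second: 8 per period of the 1 Hz tone
f = 1.0   # tone frequency, well below fs / 2 = 4 Hz
samples = {n: math.sin(2 * math.pi * f * n / fs) for n in range(-400, 401)}

t = 1 / 16  # halfway between two samples
print(reconstruct(samples, fs, t), math.sin(2 * math.pi * f * t))

# Why "more than two" matters: at exactly 2 samples per period the samples can
# all land on zero crossings, so the tone vanishes (values are effectively zero).
print(max(abs(math.sin(2 * math.pi * f * n / (2 * f))) for n in range(10)))
```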

https://en.m.wikipedia.org/wiki/Nyquist–Shannon_sampling_theorem

That water looks all weird and wrong.

Agreed, it’s usually not that spiky

Looks like matted fur.

Or a wheat field, definitely not water though…